Making the HTTP Archive faster

This week I finally got time to do some coding on the HTTP Archive. Coincidentally (ironically?) I needed to focus on performance. Hah! This turned out to be a good story with a few takeaways – info about the HTTP Archive, some MySQL optimizations, and a lesson learned about dynamic script loaders.

Setting the stage

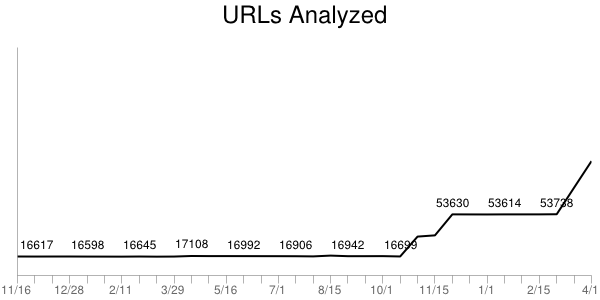

The HTTP Archive started in November 2010 by analyzing 10K URLs and storing their information (subresource URLs, HTTP headers, sizes, etc.) in a MySQL database. We do these runs twice each month. In November 2011 we began increasing the number of URLs to 25K, 50K, 75K, and finally hit 100K this month. Our goal is to hit 1M URLs by the end of 2012.

The MySQL schema in use today is by-and-large the same one I wrote in a few hours back in November 2010. I didn’t spend much time on it – I’ve created numerous databases like this and was able to quickly get something that got the job done and was fast. I knew it wouldn’t scale as the size of the archive and number of URLs grew, but I left that for another day.

That day had arrived.

DB schema

The website was feeling slow. I figured I had reached that curve in the hockey stick where my year-old schema that worked on two orders of magnitude less data was showing its warts. I saw plenty of slow queries in the log. I occasionally did some profiling and was easily able to identify queries that took 500 ms or more; some even took 10+ seconds. I’ve built big databases before and had some tricks up my sleeve so I sat down today to pinpoint the long poles in the tent and cut them down.

The first was pretty simple. The urls table has over 1M URLs. The only index was based on the URL string – a blob. It took 500-1000 ms to do a lookup. The main place this happens is looking up the URL’s rank, for example, in the last crawl Whole Foods was ranked 5,872 (according to Alexa). This is a fairly non-critical piece of information, so slowing down the page 500-1000 ms wasn’t acceptable. Plus this seems like a simple lookup ripe for optimizing.

When I described this problem to my Velocity co-chair, John Allspaw, he suggested creating a hash for the URL that would be faster to index. I understood the concept but had never done this before. I didn’t find any obvious pointers out there on “the Web” so I rolled my own. I started with md5(), but that produced a fairly long string that was alphanumeric (hex):

select md5("http://www.wholefoodsmarket.com/");

=> 0a0936fe5c690a3b468a6895efaaff83

I didn’t think it would be that much faster to index off the md5() hex string (although I didn’t test this). Assuming that md5() strings are evenly distributed, I settled on taking a substring:

select substring(md5("http://www.wholefoodsmarket.com/"), 1, 4);

=> 0a09

This was still hex and I thought an int would be a faster index (but again, I didn’t test this). So I added a call to conv() to convert the hex to an int:

select conv(substring(md5("http://www.wholefoodsmarket.com/"), 1, 4), 16, 10);

=> 2569

I was pretty happy. This maps URLs across 64K hashes. I’m assuming they’re evenly distributed. This conversion is only done a few times per page so the overhead is low. If you have a better solution please comment below, but overall I thought this would work – and it did! Those 500+ ms queries went down to < 1 ms. Yay!

But the page was still slow. Darn!

Duh – it’s the frontend

This and a few other MySQL changes shaved a good 2-3 seconds of the page load time but the page still felt slow. The biggest problem was rendering – I could tell the page arrived quickly but something was blocking the rendering. This is more familiar performance territory for me so I gleefully rolled up my sleeves and pulled out my WPO toolbox.

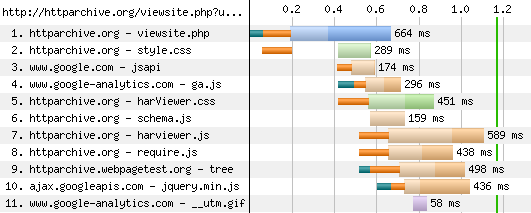

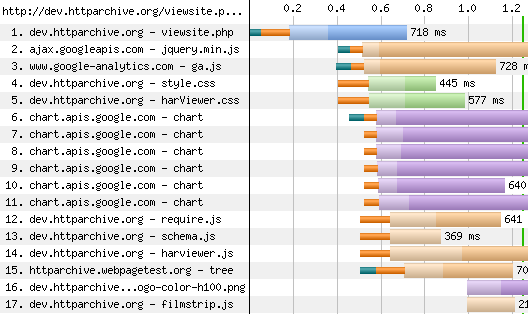

The page being optimized is viewsite.php. I used WebPagetest to capture a waterfall chart and screenshots for Chrome 18, Firefox 11, IE 8, and IE 9. The blocking behavior and rendering times were not what I consider high performance. (Click on the waterfall chart to go to the detailed WebPagetest results.)

These waterfall charts looked really wrong to me. The start render times (green vertical line) were all too high: Chrome 1.2 seconds, Firefox 2.6 seconds, IE8 1.6 seconds, and IE9 2.4 seconds. Also, too many resources were downloading and potentially blocking start render. This page has a lot of content, but most of the scripts are loaded asynchronously and so shouldn’t block rendering. Something was defeating that optimization.

Docwrite blocks

I immediately honed in on jquery.min.js because it was often in the critical path or appeared to push out the start render time. I saw in the code that it was being loaded using Google Libraries API. Here’s the code that was being used to load jquery.min.js:

<script src="http://www.google.com/jsapi"></script>

<script>

google.load("jquery", "1.5.1");

</script>

I’ve looked at (and built) numerous async script loaders and know there are a lot of details to get right, so I dug into the jsapi script to see what was happening. I saw the typical createElement-insertBefore pattern popularized by the Google Analytics async snippet. But upon walking through the code I discovered that jquery.min.js was being loaded by this line:

m.write('<script src="'+b+'" type="text/javascript"><\/script>'):

The jsapi script was using document.write to load jquery.min.js. While it’s true that document.write has some asynchronous benefits, it’s more limited than the createElement-insertBefore pattern. Serendipitously, I was just talking with someone a few weeks ago about deprecating the jsapi script because it introduces an extra HTTP request, and instead recommend that people just load the script directly. So that’s what I did.

We don’t need no stinkin’ script loader

In my case I knew that jquery.min.js could be loaded async, so I replaced the google.load code with this:

var sNew = document.createElement("script");

sNew.async = true;

sNew.src = "http://ajax.googleapis.com/ajax/libs/jquery/1.5.1/jquery.min.js";

var s0 = document.getElementsByTagName('script')[0];

s0.parentNode.insertBefore(sNew, s0);

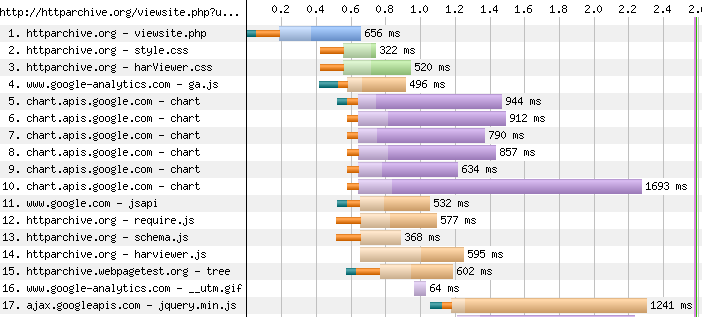

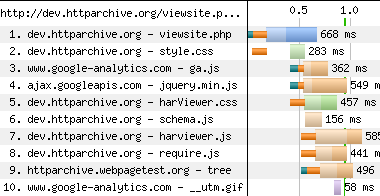

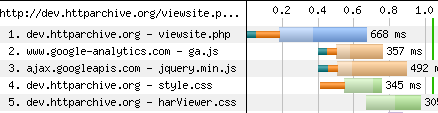

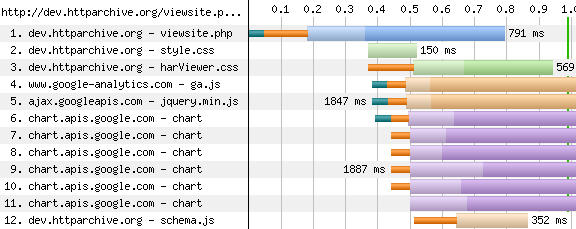

This made the start render times and waterfall charts look much better:

Chrome 18:

Firefox 11:

Internet Explorer 8:

Internet Explorer 9:

There was better parallelization of downloads and the start render times improved. Chrome went from 1.2 to 0.9 seconds. Firefox went from 2.6 to 1.3 seconds. IE8 went from 1.6 to 1.1 seconds. IE9 went from 2.4 to 1.0 seconds.

This was a fun day spent making the HTTP Archive faster. Even though I consider myself a seasoned veteran when it comes to web performance, I still found a handful of takeaways including some oldies that still ring true:

- Even for web pages that have significant backend delays, don’t forget to focus on the frontend. After all, that is the Performance Golden Rule.

- Be careful using script loaders. They have to handle diverse script loading scenarios across a large number of browsers. If you know what you want it might be better to just do it yourself.

- Be careful using JavaScript libraries. In this case

jquery.min.jsis only being used for the drop down About menu. That’s 84K (~30K compressed) of JavaScript for a fairly simple behavior.

If you’re curious about why document.write results in worse performance for dynamic script loading, I’ll dig into that in tomorrow’s blog post. Hasta mañana.

Paul Reinheimer | 09-Apr-12 at 7:59 pm | Permalink |

How are you creating the index? I’ve had great success with using a HASH rather than BTREE index in MySQL when I’m only going to be direct comparisons, rather than >, < , etc. I think it's a 5.1 feature, but it's helped it quite a bit.

lucas | 09-Apr-12 at 9:01 pm | Permalink |

will you update your book, souders?

Michael Hart | 09-Apr-12 at 10:34 pm | Permalink |

I’m confused about your hashing-as-an-index scheme. Considering that you’re reducing the space to 64k unique values and you’ve got (at least) 1M url rows, I’m guessing you query for the hash key and then sift through the 16+ rows you get back to find the actual one you’re looking for?

If that’s the case (and I might have just misunderstood what you were saying), is that really quicker than querying on a unique integer ID for each row? Even if it is, it doesn’t sound like it’ll scale very well.

Mark Rose | 09-Apr-12 at 11:37 pm | Permalink |

I have a few suggestions with regards to MySQL. I realize you’ll know most of these, and I’m repeating a lot of what you said above, but for others stumbling upon this, I hope they find it useful.

When I hash URLs, I use a SHA1 hash instead of MD5 to lessen the chance of a collision, but in your case that’s unlikely to be an issue.

I always store the raw binary output of the hashing function, not the hex output. The raw binary takes half as many characters. You’ve done the equivalent by using an integer for storage. This is a massive savings in both row and index space, and will have a huge impact on lookup performance if you’re memory constrained.

The advantage to using a BINARY column over an INT column is that you can do a prefix index. Being binary, just 3 characters will cover 256^3 or 16 million URL hashes, and 4 characters will cover 4 billion URL hashes. In the rare case the index isn’t enough, MySQL can quickly scan the few matching rows using only the index. If you’re using an integer, you have to index the full width of the integer, or, if you do the substring trick you did above, you have to do an additional match against the full URL. The latter complicates SQL queries, and every possible match using the latter causes the database page with the row to be pulled into memory, even if there is no match. This can create a huge amount of unnecessary IO. I think using a binary column is the way to go, especially when memory usage becomes a concern.

I would also consider using a binary-stored SHA1 hash as the primary key, especially for InnoDB, *if* you have fast IO. This will allow you to do fast inserts and updates instead of hammering the end of the table and dealing with lock contention as you would using a sequential integer primary key (of course, if your disks are slow, the random IO will result in worse performance). SHA1 basically ensures you never have a collision, whereas MD5 is insufficient (though MD5 may be okay in your case). There is some overhead for having a large primary key if you have secondary indexes, so if you’re looking for extremely fast URL hash to ID lookups, a separate table may be the way to go, and use a left or straight join as required.

If you’re using the substring/integer trick, and you get a lot of partial matches, and your data doesn’t fit in memory, upgrading to MySQL 5.6 will give you a big boost in performance (it fetches batches of matching rows instead of one at a time).

AUTO_INCREMENT is evil for concurrent insert performance. Don’t use it if possible.

If you decide to store the binary output, make sure to use a BINARY type for the column of the appropriate length (16/20), not VARBINARY (save at least 1 byte per row that way). If you use a CHAR/VARCHAR, be sure to use a binary encoding, and do all your comparisons using =, not LIKE, to avoid string conversion overhead (case insensitive matching is a performance killer).

I do the conversion on the application side to avoid ever having to use a function in the WHERE clause, as MySQL doesn’t support indexes on functions. Web or worker machines are easier to scale than database machines, so I move as much work away from the database as possible. As you’re using PHP, just call md5() or sha1() with the second argument being ‘true’. Both are very fast functions in PHP.

And on a personal note, Mr. Souders, you’ve been a huge inspiration, and I’ve found your work on end-user experience to be absolutely invaluable.

Cheers,

Mark

Mark Rose | 09-Apr-12 at 11:46 pm | Permalink |

I realize I made a mistake after hitting submit. Using a prefix index on a BINARY does force myself to pull the row when the prefix matches. My bad. Going with the BINARY is still the way to go though, because as the data set grows, it’s easier to change the index to a longer prefix than to recalculate the integers, and there’s no need for the second comparison in the SQL. The main point is that you really want the prefix index to be long enough to very rarely match more than a couple of rows.

Cheers,

Mark

Michael J. Ryan | 10-Apr-12 at 1:14 am | Permalink |

I don’t think it should be the primary key, as stated above, but do feel that keeping the entire SHA/MD5 set as a main lookup index would be helpful. You’re dropping about 75% of the data regarding the hash by converting it to an integer. 16bytes (binary) isn’t that big to index against, and if you use a lookup table will be even better wrt indexing as a foreign key.

As mentioned above, use BINARY(16) for storing the result as binary, not hex. You may also want to consider a database solution based around map/reduce… There are a number of non-relational databases that could suit your needs. Including Hadoop, CouchDB, MongoDB and Redis. Each has distinct advantages and disadvantages. The biggest advantage is being able to scale horizontally, which becomes more of an issue, the more data you are attempting to manipulate.

That said, nice catch on the docwrite, I’ve found a few issues in various scripts from the goog, especially when combined with other scripts… like es5shim under ie8, etc. Google often uses for..in without checking hasOwnProperty, so the shim’s methods case the google scripts to blow up in IE8.

on the plus side, to library scripts like jquery, using a common CDN endpoint at least increases the likelyhood of a cache hit… I do wish the common CDNs were a bit more comprehensive though.

Morgan Tocker | 10-Apr-12 at 4:52 am | Permalink |

Quoting from the text:

—

I didn’t find any obvious pointers out there on “the Web†so I rolled my own.

—

This is actually covered in High Performance MySQL (page 103 in the 2nd edition, page 154 in the 3rd edition). What the authors suggests is:

CREATE TABLE urls (

id int not null primary key auto_increment,

url varchar(255) NOT NULL,

url_crc32 INT UNSIGNED NOT NULL,

..

);

Where queries are rewritten to:

SELECT * FROM urls WHERE url_crc32=CRC(‘blah’) and url = ‘blah’;

The first part of the query uses the index, the second part avoids hash collisions.

Emil Vikström | 10-Apr-12 at 6:17 am | Permalink |

Paul Reinheimer, note that HASH indices is supported by the MEMORY and NDB storage engines only. A HASH index declaration for InnoDB and MyISAM will be ignored and you end up with a BTREE anyway. BTREE indices are, however, very close to HASH ones since the tree height is very small in most cases (due to large branching on each level).

Steve Souders | 10-Apr-12 at 11:21 pm | Permalink |

@Paul: create index urlhash on urls (urlhash);

@lucas: I’d love to update my books if there’s time. The downsides of document.write are already described in Even Faster Web Sites.

@MichaelH: Yes, I have to search on the urlhash AND the url itself. The performance and scaling are pretty good for now. If I go above 1M URLs it could become an issue.

@Mark: Great great comments. I agree about the complexity of the SQL (a la Michael’s comment as well). I’ll look into BINARY. Really appreciate the point about moving functions from SQL to PHP. I thought about that and will move it over to the app side. And thanks for the kind words! Wow! I’d love help on the MySQL side of HTTP Archive. ;-)

@MichaelR: Thanks for the additional tips on BINARY. There is a significant critical mass effect – not huge but noticeable: Sites using Google Libraries API.

@Morgan: I sat down with Jeremy Zawodny for tips before writing High Performance Web Sites and borrowed the title pattern from him. Great book (including the new editions)!

Jeff Costa | 11-Apr-12 at 2:18 pm | Permalink |

Steve: Another option might be to pull your JQuery libraries from a CDN like Cloudflare. They maintain a free, fast set of libraries you can link into your pages which might give you another speed bump. Here’s the list of what they offer: http://www.cdnjs.com/#/search/#ui

Pawel Urbanski | 13-Apr-12 at 4:57 am | Permalink |

Hi Steeve and others,

MySQL 5.5 and higher have the partitioning feature. You could partition the data by some key or a hash.

Another option would be to use Percona’s MySQL, which by many sources is faster. They are at: http://www.percona.com/ – if anyone wants to have a look.

There are also column-oriented MySQL editions such as InfoBright. The storage requrements are very often 8 or 10 times smaller. They have some specific hashing features for fast querying. These MySQL editions are built for data warehousing loads. If the data requirements would be very much smaller then you could get an ssd disk or two.

Greetings,

Pawel Urbanski

robzilla | 24-Apr-12 at 1:00 pm | Permalink |

“That’s 84K (~30K compressed) of JavaScript for a fairly simple behavior” — then why not get rid of it, I wonder, when with a few lines of pure CSS you can achieve the same effect and shave off ~82/29K? It amazes me how easily people sprinkle a bit of jQuery here and there nowadays, and I have no doubt it’s the one biggest influence on that scary upward trend in the JS Transfer Sizes chart.

Steve Souders | 24-Apr-12 at 3:21 pm | Permalink |

@robzilla: In fact I did that on that simple page. But there are other pages that use jQuery for much more complex functionality that isn’t easy to rewrite from scratch. But I totally resonate with your concern that people might be over eager to just throw jQuery into the mix instead of writing a small amount of raw code. I’m currently investigating a way we could automate the detection and correction of such a situation.

Charlie Clark | 25-May-12 at 12:43 pm | Permalink |

Don’t optimise prematurely!

I’m not familiar with the code base but I do use the exported data. Thanks for a fantastic resource.

The first thing to look at when trying to optimise database-driven websites is the number of connections and queries to the database involved in returning a page. It can easily take 500 ms to get a connection and cursor.

I think it is essential to normalise the database first, match types to data, add essential indices, profile, add and tweak indices. If necessary you can then add ancillary tables or views for calculated results but you should always be aiming to let the database query planner do its job. Give the database server as much memory as it needs and configure it accordingly.

Even with a million rows the URLs table shouldn’t be a problem on modern hardware with the correct indices. An index should never be a blob, simply giving the candidate key the right size varchar should be an improvement. This can be refined to a binary value primary key (plus constraint) if necessary. Unfortunately I don’t find EXPLAIN on MySQL anything near as helpful as on Postgres.

If your database has relations between tables then use a backend that supports and enforces foreign keys. You can always disable them for batch processing but otherwise they can help you identify denormalised and, thus, inefficient data structures. As noted above drop auto_increment where you already have reasonable candidate keys. Better to look for better indices than inappropriate surrogates.

Keep your data-processing on the client to a minimum unless there is a particular function that you know you only need to apply to the result set – date time formatting springs to mind.

You can probably replace the JQuery entirely using the Superfish CSS dropdown navigation.