newtwitter performance analysis

Among the exciting launches last week was newtwitter – the amazing revamp of the Twitter UI. I use Twitter a lot and was all over the new release, and so was stoked to see this tweet from Twitter developer Ben Cherry:

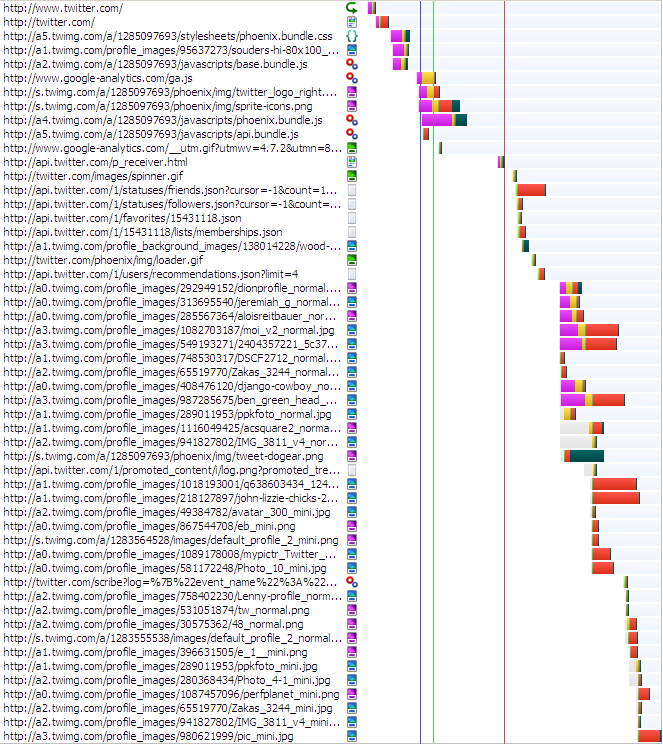

The new Twitter UI looks good, but how does it score when it comes to performance? I spent a few hours investigating. I always start with HTTP waterfall charts, typically generated by HttpWatch. I look at Firefox and IE because they’re the most popular browsers (and I use a lot of Firefox tools). Here’s the waterfall chart for Firefox 3.6.10:

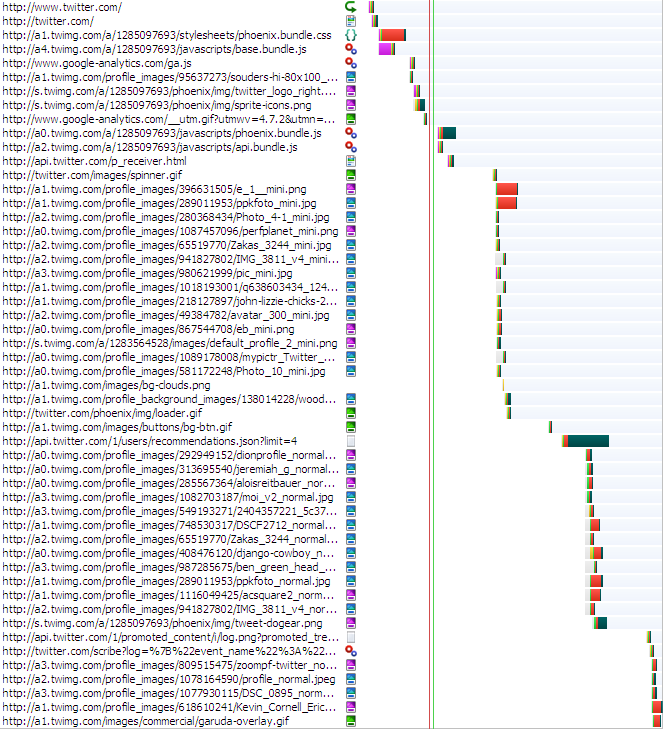

I used to look at IE7 but its market share is dropping, so now I start with IE8. Here’s the waterfall chart for IE8:

From the waterfall charts I generate a summary to get an idea of the overall page size and potential for problems.

| | Firefox 3.6.10 | IE8 |
|---|---|---|
| main content rendered | ~4 secs | ~5 secs |
| window onload | ~2 secs | ~7 secs |
| network activity done | ~5 secs | ~7 secs |
| # requests | 53 | 52 |
| total bytes downloaded | 428 kB | 442 kB |
| JS bytes downloaded | 181 kB | 181 kB |
| CSS bytes downloaded | 21 kB | 21 kB |

I study the waterfall charts looking for problems, primarily focused on places where parallel downloading stops and where there are white gaps. Then I run Page Speed and YSlow for more recommendations. Overall newtwitter does well, scoring 90 on Page Speed and 86 on YSlow. Combining all of this investigation results in this list of performance suggestions:

- Script Loading – The most important thing to do for performance is get JavaScript out of the way. “Out of the way” has two parts:

  - blocking downloads – Twitter is now using the new Google Analytics async snippet (yay!) so ga.js isn’t blocking. However, base.bundle.js is loaded using normal SCRIPT SRC, so it blocks. In newer browsers there will be some parallel downloads, but even IE9 will block images until the script is done downloading. In both Firefox and IE it’s clear this is causing a break in parallel downloads. phoenix.bundle.js and api.bundle.js are loaded using LABjs in conservative mode. In both Firefox and IE there’s a big block after these two scripts. It could be LABjs missing an opportunity, or it could be that the JavaScript is executing. But it’s worth investigating why all the resources lower in the page (~40 of them) don’t start downloading until after these scripts are fetched. It’d be better to get more parallelized downloads.

  - blocked rendering – The main part of the page takes 4-5 seconds to render. In some cases this is because the browser won’t render anything until all the JavaScript is downloaded. However, in this page the bulk of the content is generated by JS. (I concluded this after seeing that many of the image resources are specified in JS.) Rather than download a bunch of scripts to dynamically create the DOM, it’d be better to do this on the server side as HTML as part of the main HTML document. This can be a lot of work, but the page will never be fast for users if they have to wait for JavaScript to draw the main content in the page. After the HTML is rendered, the scripts can be downloaded in the background to attach dynamic behavior to the page.

- scattered inline scripts – Fewer inline scripts are better. The main reason is that a stylesheet followed by an inline script blocks subsequent downloads. (See Positioning Inline Scripts.) In this page, phoenix.bundle.css is followed by the Google Analytics inline script. This will cause the resources below that point to be blocked – in this case the images. It’d be better to move the GA snippet to the SCRIPT tag right above the stylesheet.

- Optimize Images – This one image alone could be optimized to save over 40K (out of 52K): http://s.twimg.com/a/1285108869/phoenix/img/tweet-dogear.png.

- Expires header is missing – Strangely, some images are missing both the Expires and Cache-Control headers, for example, http://a2.twimg.com/profile_images/30575362/48_normal.png. My guess is this is an origin server push problem.

- Cache-Control header is missing – Scripts from Amazon S3 have an Expires header, but no Cache-Control header (e.g., http://a2.twimg.com/a/1285097693/javascripts/base.bundle.js). This isn’t terrible, but it’d be good to include Cache-Control: max-age. The reason is that Cache-Control: max-age is relative (“# of seconds from right now”) whereas Expires is absolute (“Wed, 21 Sep 2011 20:44:22 GMT”). If the client has a skewed clock the actual cache time could be different from what was expected. In reality this happens infrequently.

- redirect – http://www.twitter.com/ redirects to http://twitter.com/. I love using twitter.com instead of www.twitter.com, but for people who use “www.” it would be better not to redirect them. You can check your logs and see how often this happens. If it’s small (< 1% of unique users per day) then it’s not a big problem.

- two spinners – Could one of these spinners be eliminated: http://twitter.com/images/spinner.gif and http://twitter.com/phoenix/img/loader.gif ?

- mini before profile – In IE the “mini” images are downloaded before the normal “profile” images. I think the profile images are more important, and I wonder why the order in IE is different than Firefox.

- CSS cleanup – Page Speed reports that there are > 70 very inefficient CSS selectors.

- Minify – Minifying the HTML document would save ~5K. Not big, but it’s three roundtrips for people on slow connections.
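To make the Expires versus Cache-Control: max-age point from the list above concrete, here’s a minimal sketch. It assumes a simplified client that compares Expires directly against its own clock (real caches can also use the response’s Date header to correct for this). The function names, the two-day skew, and the one-year max-age value are all made up for illustration.

```javascript
// Sketch only: hypothetical helpers showing why a skewed client clock
// affects Expires but not Cache-Control: max-age.

// Expires is an absolute date, so the remaining lifetime depends on
// whatever the client believes "now" is.
function lifetimeFromExpires(expiresHeader, clientNowMs) {
  const expiresMs = Date.parse(expiresHeader);
  return Math.max(0, Math.round((expiresMs - clientNowMs) / 1000));
}

// max-age is a relative number of seconds, so the client's idea of the
// current date does not enter into it.
function lifetimeFromMaxAge(cacheControlHeader) {
  const match = /max-age=(\d+)/.exec(cacheControlHeader);
  return match ? Number(match[1]) : null;
}

const SECONDS_PER_DAY = 24 * 60 * 60;

// The server sends the response at this moment, with a one-year lifetime.
const serverNowMs = Date.parse("Tue, 21 Sep 2010 20:44:22 GMT");
const expiresHeader = "Wed, 21 Sep 2011 20:44:22 GMT";
const cacheControlHeader = "public, max-age=31536000";

// Assumption for the example: the client's clock runs two days fast.
const skewedClientNowMs = serverNowMs + 2 * SECONDS_PER_DAY * 1000;

const daysViaExpires =
  lifetimeFromExpires(expiresHeader, skewedClientNowMs) / SECONDS_PER_DAY;
const daysViaMaxAge =
  lifetimeFromMaxAge(cacheControlHeader) / SECONDS_PER_DAY;

console.log("days cached via Expires:", daysViaExpires);
console.log("days cached via max-age:", daysViaMaxAge);
```

With the client clock two days fast, the Expires-based lifetime comes out two days short, while the max-age lifetime is unaffected.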

Overall newtwitter is beautiful and fast. Most of the Top 10 web sites are using advanced techniques for loading JavaScript. That’s the key to making today’s web apps fast.
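As one example of what loading JavaScript without blocking can look like, here’s a rough sketch of the dynamic script element pattern, the same basic idea behind the Google Analytics async snippet. This is not Twitter’s code and not what LABjs does internally. The function name and the `doc` parameter are hypothetical (the parameter is only there so the function can be tried outside a browser), and it assumes a browser that fires onload on script elements.

```javascript
// Sketch only: insert a SCRIPT element from JavaScript so the download
// does not block other resources the way a plain SCRIPT SRC tag can.
// `doc` defaults to the page's document; it is a parameter purely so the
// function can be exercised with a stand-in object.
function loadScriptAsync(src, onload, doc = document) {
  const script = doc.createElement("script");
  script.async = true;
  script.src = src;
  if (onload) {
    // Runs once the script has been downloaded and executed.
    script.onload = onload;
  }
  // Insert before the first existing script element; at least one is
  // assumed to exist (the one containing this code).
  const firstScript = doc.getElementsByTagName("script")[0];
  firstScript.parentNode.insertBefore(script, firstScript);
  return script;
}

// Example call in a browser (the path is illustrative):
// loadScriptAsync("/javascripts/base.bundle.js", function () {
//   // attach dynamic behavior here
// });
```

Scripts loaded this way can execute in any order, so scripts that depend on each other need extra coordination, which is the kind of thing loaders like LABjs handle.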

Jos Hirth | 22-Sep-10 at 11:13 pm | Permalink |

301 redirects (permanently moved) are cached by browsers. But I’m not really sure if that piece of information survives a browser restart.

If it does, that redirect won’t really hurt – even if half of the users would manually type that “www” each time.

Hm… browsers should probably rewrite bookmarks if they hit a 301. I mean, that would make lots of sense, wouldn’t it?

Jesse Ruderman | 22-Sep-10 at 11:20 pm | Permalink |

By redirecting, twitter ensures that the vast majority of visitors always hit the no-www hostname, which improves cache hit rates. If some links to twitter used www and others did not, users would get a lot more cache misses.

I’m curious if the resource-blocking behaviors have changed in Firefox 4.

Simon Willison | 23-Sep-10 at 3:18 am | Permalink |

Gotta disagree on the HTTP redirect issue – it may offer a tiny performance advantage, but having only one canonical URL for a page on a site is much better for your site’s general health, and the health of the overall web ecosystem.

It’s programmatically very difficult to be absolutely sure that http://www.twitter.com/simonw and twitter.com/simonw point to the same page, which causes problems with tools like delicious.com due to some users linking to one variant and others linking to the other.

sajal | 23-Sep-10 at 4:16 am | Permalink |

Agree with Simon on the redirect issue… while google may be able to guess that both pages are the same… it’s always better to inform them with a 301 redirect.

This would prevent googlebot (and others) from crawling both versions and generating additional load/traffic for twitter… and generating more whales…

Sergey Chernyshev | 23-Sep-10 at 6:18 am | Permalink |

@Steve Agree completely that rendering using JS is often a mistake and plain HTML (with minimal inline CSS maybe) is very good thing to have as it’s the fastest thing out there ;) Then load CSS then JS to augment data and behaviors (better after user starts to read the page).

I often add WebPageTest.org’s screenshot sequence and video to the mix to help people visualize what’s going on. It helps a lot to see when page actually makes sense to a human brain – quite hard to catch this event without custom events (even then it’s quite hard). It might be hard (without custom setup) to do WebPageTest on sites where auth is required though like in this case (don’t know if scripting would solve the problem)

Now, I’m thinking, maybe we should have some of the Meet for SPEED members analyze some popular sites instead of their own and publish it for people to see ;)

@Jesse I disagree regarding the cache misses – HTML is not cached most likely anyway, but the rest of the assets should be loaded from CDNs in any case, so main domain name doesn’t matter really. Which means that cost of extra DNS lookup, connection, request and response should probably go away.

@Simon it’s always a balance and is not a universal rule, but considering that most of the tools on the net don’t do canonical resolution or even update on 301, I would agree that it’s too early to remove redirects for all cases.

Ben Cherry | 23-Sep-10 at 3:29 pm | Permalink |

Thanks for doing this analysis, Steve! Lots of good suggestions we’re going to begin implementing soon. Hopefully things will get even faster :)

Steve Souders | 23-Sep-10 at 4:34 pm | Permalink |

Some smart people have disagreed with my redirect suggestion. That’s definitely a valid point, but let me relay some more details about this issue.

First, redirects are not cached in most cases. In response to Jos’s comment, redirect caching is primarily based on the expires headers (and less so on the status code – 301, 302, etc.). It turns out that browsers do not cache redirects in a majority of the situations in which they should. See Redirect caching deep dive and click on the results table.

Given that redirects aren’t cached, they are a recurring performance problem. One good thing in this situation is that popular search engines point to twitter.com (without “www”). But a tricky thing here is that I believe most users think “www.” is the right format for URLs (I have no data on this tho), so if they manually enter the URL they’ll get redirected. Google also redirects (I wish it didn’t but nevertheless) but it redirects from google.com to http://www.google.com. In this case Twitter is redirecting from what is IMO the more typical URL (www.twitter.com) to the less typical one (twitter.com). Because this redirect doesn’t have caching headers, and most likely wouldn’t be cached even if it did, it happens more frequently than a redirect going the other way (from non-www to www) would.

I’d like feedback on how problematic supporting both pages is for caching and search engines. I think it would be possible (base href?) to use relative URLs for resources so they always came from one domain (“twitter.com” vs “www.twitter.com”). And all links would then also be on the single preferred domain. I also believe cookies will work across the non-www and www domains.

I’ll definitely give ground that the redirect should be left in place, but it also depends on the frequency. If < 1% of unique users hit that redirect once a day, fine - leave it. If it's > 10% it should be fixed. As with most problems like this, the current state of the world is in that middle ground where the decision is trickier.
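If you have access logs, the check I’m describing could be sketched like this. The log entry shape (`userId`, `host`) and the function names are hypothetical; the 1% and 10% thresholds are the ones from above.

```javascript
// Sketch only: estimate what share of a day's unique users hit the
// redirecting host, then apply the rough thresholds discussed above.
// Each entry is assumed to look like { userId: "...", host: "..." }.
function redirectShare(entries, redirectHost = "www.twitter.com") {
  const allUsers = new Set();
  const redirectedUsers = new Set();
  for (const { userId, host } of entries) {
    allUsers.add(userId);
    if (host === redirectHost) {
      redirectedUsers.add(userId);
    }
  }
  // Avoid dividing by zero when there is no traffic in the sample.
  return allUsers.size === 0 ? 0 : redirectedUsers.size / allUsers.size;
}

function redirectRecommendation(share) {
  if (share < 0.01) return "leave the redirect";
  if (share > 0.10) return "fix the redirect";
  return "middle ground: judgment call";
}

// Made-up sample: four unique users, one of whom typed the "www." form.
const sampleEntries = [
  { userId: "a", host: "twitter.com" },
  { userId: "b", host: "www.twitter.com" },
  { userId: "b", host: "twitter.com" },
  { userId: "c", host: "twitter.com" },
  { userId: "d", host: "twitter.com" },
];

const sampleShare = redirectShare(sampleEntries);
console.log(sampleShare, redirectRecommendation(sampleShare));
```

Counting unique users rather than raw requests keeps one heavy user from skewing the number.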

@BCherry – Would you be willing to share the %age of unique users that hit this redirect daily?

dalin | 23-Sep-10 at 10:39 pm | Permalink |

“I’ll definitely give ground that the redirect should be left in place, but it also depends on the frequency. If < 1% of unique users hit that redirect once a day, fine - leave it. If it's > 10% it should be fixed. As with most problems like this, the current state of the world is in that middle ground where the decision is trickier.”

I’m not sure it’s this simple. I think you’ve got to pick one from the day you launch your domain and stick with it. If twitter were to all of a sudden change to www then not only have they got to deal with a load of redirects from the other direction, they will also see their page rank significantly drop until Google catches up.

Personally I think the web would be a simpler place if there were no www, so I always set my clients up without it unless their previous site specifically used it.

P.S. Your CAPTCHA is interesting. I’m not sure if “four plus tin” is 14, 4, fourtin, infinity, or what.

Jos Hirth | 25-Sep-10 at 9:56 am | Permalink |

Thanks for the reply, Steve. Of course you also did an in-depth analysis in the past… I should have seen that coming. ;)

There is one thing I’d like to point out though: You have to redirect no-www to www if you’d like to use a cookie-less subdomain for your static content (which is a good idea for smaller sites which use cookies). As far as cookies are concerned, having no subdomain at all is like a wildcard.

There is also , which should at least address the search engine concerns of having www and no-www without a redirect.

Well, I for one will keep my www to no-www 301 redirects. More than 99% of my traffic comes from search engines and social news/bookmarking sites. Virtually no one gets redirected ever.

Jos Hirth | 25-Sep-10 at 10:00 am | Permalink |

That should have been: “There is also `<link rel="canonical" href="…"/>` […]”.

(There should be a hint that HTML tags are stripped. I also don’t know if entities will work. Well, I’ll see.)

Ben Cherry | 25-Sep-10 at 11:19 am | Permalink |

@souders – Sorry, I can’t share that number :)

We’ll take this advice seriously, and if it’s a large enough percentage, I’m sure we’ll look into your solutions.

Eric Danielson | 27-Sep-10 at 1:02 pm | Permalink |

A couple minor points:

– In your mention of scattered inline scripts, you link to “https://stevesouders.com/2009/05/06/positioning-inline-scripts/” but that is a dead link.

– I’ve primarily worked with Fiddler, so it could be due to a lack of familiarity with HTTP Watch’s waterfall charts, but it looks like at some points you have >2 simultaneous downloads from the same domain in Firefox. Were you using the default network.http.max-persistent-connections-per-server value of 2?

Steve Souders | 27-Sep-10 at 1:14 pm | Permalink |

@Eric: I fixed the link – thanks! Firefox 3+ does 6 persistent connections per server as shown in Browserscope. Be careful using Fiddler – it changes the number of connections because it’s a proxy.

Tina Cartigan | 26-Oct-10 at 7:15 am | Permalink |

I think your waterfall charts are beautiful, but I simply don’t have the same positive experience with the new Twitter that you seem to have.

The new Twitter, for me, is dog-slow. Scripts take forever to run; the UI is far too wide; and in Firefox, I’ve noticed that sites in adjacent tabs are having rendering issues when Twitter is opened. What’s that all about?

I’m on a new computer, running Windows 7.

Sudhee | 26-Oct-10 at 10:49 pm | Permalink |

Along the same lines, would you be able to analyze new Y!Mail beta in terms of performance – http://features.mail.yahoo.com/

We’re still doing lot of work to improve it!