UA Profiler improvements

UA Profiler is the tool I released 3 months ago that tracks the performance traits of various browsers. It’s a community-driven project – as more people use it, the data gains coverage and accuracy. So far, 7,000 people have run 10,000 tests across 150 different browser versions (2,500 unique User Agents). Over the past week (since my Stanford class ended), I’ve been adding some requested improvements.

- drilldown

Previously, I had one label for a browser. For example, Firefox 3.0 and 3.1 results were all lumped under “Firefox 3”. This week I added the ability to drill down to see more detailed data. The results can be viewed in five ways:

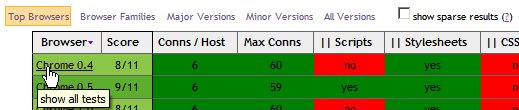

- Top Browsers – The most popular browsers as well as major new versions on the horizon.

- Browser Families – The full list of unique browser names: Android, Avant, Camino, Chrome, etc.

- Major Versions – Grouped by first version number: Firefox 2, Firefox 3, IE 6, IE 7, etc.

- Minor Versions – Grouped by first and second version numbers: Firefox 3.0, Firefox 3.1, Chrome 0.2, Chrome 0.3, etc.

- All Versions – At most I save three levels of version numbers. Here you can see Firefox 3.0.1, Firefox 3.0.2, Firefox 3.0.3, etc.
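The grouping levels above can be illustrated with a short sketch. This is not UA Profiler's actual code; it assumes each test has already been parsed into a browser family and a dotted version string, and the helper names (`version_label`, `group_counts`) and the sample data are hypothetical.

```python
from collections import Counter


def version_label(family, version, levels):
    """Build a label that keeps at most `levels` version parts.

    levels=0 gives the family only ("Firefox"), levels=1 the major
    version ("Firefox 3"), levels=2 the minor version ("Firefox 3.0"),
    and levels=3 the full three-level version ("Firefox 3.0.1").
    """
    parts = version.split(".")[:levels]
    return " ".join([family, ".".join(parts)]).strip()


def group_counts(tests, levels):
    """Count tests per label at the requested level of detail."""
    return Counter(version_label(f, v, levels) for f, v in tests)


# Hypothetical (family, version) pairs, as if parsed from User Agent strings.
tests = [
    ("Firefox", "3.0.1"),
    ("Firefox", "3.0.3"),
    ("Firefox", "3.1.0"),
    ("Chrome", "0.2.149"),
    ("Chrome", "0.3.154"),
]

families = group_counts(tests, 0)      # Browser Families
major = group_counts(tests, 1)         # Major Versions
minor = group_counts(tests, 2)         # Minor Versions
all_versions = group_counts(tests, 3)  # All Versions
```

With this sample data, the two Firefox 3.0.x tests share one row in the Minor Versions view and split into separate rows in the All Versions view.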

- hiding sparse data

The result tables grew lengthy due to unusual User Agent strings with atypical version numbers. These might be the result of nightly builds or manual tweaking of the User Agent. Now, I only show browsers tested by at least two different people a total of four or more times. If you want to see all browsers, regardless of the amount of testing, check “show sparse results” at the top.
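As a rough illustration of the sparse-data rule (at least two different people, four or more tests in total), here is a minimal sketch. It is not the site's implementation: how UA Profiler tells testers apart is not stated, so the `tester_id` field, the function name `visible_browsers`, and the sample labels are all assumptions.

```python
from collections import defaultdict

MIN_TESTERS = 2  # at least two different people
MIN_TESTS = 4    # four or more tests in total


def visible_browsers(tests, show_sparse=False):
    """Return the sorted browser labels that should appear in the table.

    `tests` is an iterable of (browser_label, tester_id) pairs; tester_id
    is a hypothetical identifier for the person who ran the test.
    """
    test_counts = defaultdict(int)
    testers = defaultdict(set)
    for browser, tester_id in tests:
        test_counts[browser] += 1
        testers[browser].add(tester_id)
    return sorted(
        browser
        for browser in test_counts
        if show_sparse
        or (len(testers[browser]) >= MIN_TESTERS
            and test_counts[browser] >= MIN_TESTS)
    )


# Hypothetical sample data.
tests = (
    [("Firefox 3.0", "a")] * 2 + [("Firefox 3.0", "b"), ("Firefox 3.0", "c")]
    + [("Nightly 9.9", "a")] * 4                    # many tests, one person
    + [("Chrome 1.0", "a"), ("Chrome 1.0", "b")]    # two people, two tests
)

default_view = visible_browsers(tests)
full_view = visible_browsers(tests, show_sparse=True)
```

Both conditions must hold: a browser tested four times by a single person stays hidden, as does one tested only twice by two people, unless sparse results are requested.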

- individual tests

Several people asked to see the individual test results, that is, each test that was run for a certain browser. There were several motivations: Was there much variation for test X? What were the exact User Agent strings that were matched to this browser? When were the tests done (because that problem was fixed on such-and-such a date)? When looking at a results table, clicking the browser name opens a new table that shows the results for each test under that browser.

- sort

Once I sat down to do it, it took me ~5 minutes to make the results table sortable using Stuart Langridge’s sorttable. Now you can sort to your heart’s content. (This weekend I’ll write a post about how I made his code work when loaded asynchronously using a variation of John Resig’s Degrading Script Tags pattern.)

UA Profiler has been quite successful, gathering a consistent amount of testing each day. I especially enjoy seeing people running nightly builds against it. It’s fun to look at the individual tests and get some visibility into how a browser’s code base evolves. For example, looking at the details for Chrome 1.0, we see that Chrome 1.0.154 failed the “Parallel Scripts” test, but Chrome 1.0.155 passed. Looking at the User Agent strings we see that Chrome 1.0.154 was built using WebKit 525, whereas Chrome 1.0.155 upgraded to WebKit 528. The upgrade in WebKit version was the key to attaining this important performance trait.
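The Chrome and WebKit versions in that comparison come straight from the User Agent string. A minimal sketch of pulling them out with regular expressions follows; the function name is hypothetical, and the two User Agent strings are illustrative examples shaped like Chrome's format, not strings taken from the UA Profiler data.

```python
import re

# Major WebKit build number, e.g. "AppleWebKit/525.19" -> 525
WEBKIT_RE = re.compile(r"AppleWebKit/(\d+)")
# First three levels of the Chrome version, e.g. "Chrome/1.0.154.36" -> 1.0.154
CHROME_RE = re.compile(r"Chrome/(\d+)\.(\d+)\.(\d+)")


def chrome_and_webkit(user_agent):
    """Return (chrome_version, webkit_major), or None if either is missing."""
    chrome = CHROME_RE.search(user_agent)
    webkit = WEBKIT_RE.search(user_agent)
    if chrome is None or webkit is None:
        return None
    return ".".join(chrome.groups()), int(webkit.group(1))


# Illustrative User Agent strings (assumed for this example).
ua_154 = ("Mozilla/5.0 (Windows; U; Windows NT 5.1; en-US) "
          "AppleWebKit/525.19 (KHTML, like Gecko) "
          "Chrome/1.0.154.36 Safari/525.19")
ua_155 = ("Mozilla/5.0 (Windows; U; Windows NT 5.1; en-US) "
          "AppleWebKit/528.8 (KHTML, like Gecko) "
          "Chrome/1.0.155.0 Safari/528.8")

for ua in (ua_154, ua_155):
    print(chrome_and_webkit(ua))
```

Listing these pairs next to each test result makes it easy to spot when a change in behavior coincides with a change in the underlying WebKit build.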

I also know of at least one case of a browser regression that the development team fixed because it was flagged by UA Profiler. This is an amazing side effect of tracking these performance traits – actually helping browser teams improve the performance of their browsers, the browsers that you and I use every day. My next task is to improve the tests in UA Profiler. I’ll work on that. Your job is to keep running your favorite browser (and mobile web device!) through the UA Profiler test suite to highlight what’s being done right, and what more is needed to make our web experience fast by default.

Lenny Rachitsky | 20-Dec-08 at 1:44 pm

This could be a good tool for monitoring companies to run their browsers through to see how they stack up.