Silk, iPad, Galaxy comparison

In my previous blog post I announced Loadtimer – a mobile test harness for measuring page load times. I was motivated to create Loadtimer because recent reviews of the Kindle Fire lacked the quantified data and reliable test procedures needed to compare browser performance.

Most performance evaluations of Silk that have come out since its launch have two conclusions:

- Silk is faster when acceleration is turned off.

- Silk is slow compared to other tablets.

Let’s poke at those more rigorously using Loadtimer.

Test Description

In this test I’m going to compare the following tablets: Kindle Fire (with acceleration on and off), iPad 1, iPad 2, Galaxy 7.0, and Galaxy 10.1.

The test is based on how long it takes for web pages to load on each device. I picked 11 URLs that are top US websites:

- http://www.yahoo.com/

- http://www.amazon.com/

- http://en.wikipedia.org/wiki/Flowers

- http://www.craigslist.com/

- http://www.ebay.com/

- http://www.linkedin.com/

- http://www.bing.com/search?q=flowers

- http://www.msn.com/

- http://www.engadget.com/

- http://www.cnn.com/

- http://www.reddit.com/

Some popular choices (Google, YouTube, and Twitter) weren’t selected because they have framebusting code and so don’t work in Loadtimer’s iframe-based test harness.

The set of 11 URLs was loaded 9 times on each device, with the URL order randomized for each run. All the tests were conducted on my home wifi over a Comcast cable modem. (Check out this photo of my test setup.) All the tests were done at the same time of day over a 3-hour period. I did one test at a time to avoid bandwidth contention, and rotated through the devices doing one run at a time. I cleared the browser cache between runs.
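The procedure above can be sketched in a few lines of Python. This is not Loadtimer's code, only an illustration of the rotation. The function name `build_schedule`, the fixed seed, and the choice to give each device its own shuffled order within a run are assumptions, as is treating Silk with acceleration on and off as two separate entries in the rotation.

```python
import random

# Device labels come from the test description; the URL list is abbreviated.
DEVICES = [
    "Silk (accel on)", "Silk (accel off)",
    "iPad 1", "iPad 2", "Galaxy 7.0", "Galaxy 10.1",
]
URLS = [
    "http://www.yahoo.com/",
    "http://www.amazon.com/",
    "http://en.wikipedia.org/wiki/Flowers",
    # ... the remaining URLs from the list above
]
RUNS = 9


def build_schedule(devices, urls, runs, seed=None):
    """Return a list of (run_number, device, shuffled_urls) steps.

    Every run visits each device once, one at a time, and each step
    gets a freshly shuffled copy of the URL list (an assumption).
    """
    rng = random.Random(seed)
    schedule = []
    for run in range(1, runs + 1):
        for device in devices:
            order = list(urls)  # copy so the master list keeps its order
            rng.shuffle(order)
            schedule.append((run, device, order))
    return schedule


schedule = build_schedule(DEVICES, URLS, RUNS, seed=2011)
for run, device, order in schedule[:2]:
    print(run, device, order[0])
```

Rotating through the devices within each run, rather than finishing all 9 runs on one device first, spreads any drift in network conditions over the 3-hour window evenly across devices.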

Apples and Oranges

The median page load time for each URL on each device is shown in the Loadtimer Results page. It’s a bit complicated to digest. The fastest load time is shown in green and the slowest is red – that’s easy. The main complication is that not every device got the same version of a given URL. Cells in the table that are shaded with a gray background were cases where the device received a mobile version of the URL. Typically (but not always) the mobile version is lighter than the desktop version (fewer requests, fewer bytes, less JavaScript, etc.) so it’s not valid to do a head-to-head comparison of page load times between desktop and mobile versions.

Out of 11 URLs, the Galaxy 7.0 received 6 that were mobile versions. The Galaxy 10.1 and Silk each received 2 mobile versions, and the iPads each had only one mobile version across the 11 URLs.

In order to gauge the difference between the desktop and mobile versions, the results table shows the number of resources in each page. eBay, for example, had 64 resources in the desktop version, but only 18-22 in the mobile version. Not surprisingly, the three tablets that received the lighter mobile version had the fastest page load times. (If a mobile version was faster than the fastest desktop version, I show it in non-bolded green with a gray background.)
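A rough sketch of how one row of such a table could be computed is shown here. The device names and timings are made up for illustration and are not the measured results. Ranking fastest and slowest only among devices that received the desktop version is an assumption about how to keep the comparison like-for-like.

```python
from statistics import median

# Hypothetical samples (ms) for one URL: device -> (page version, load times).
samples = {
    "Device A": ("desktop", [4100, 3900, 4300, 4000, 5200]),
    "Device B": ("desktop", [3600, 3500, 3900, 3700, 3550]),
    "Device C": ("mobile", [1900, 2100, 2000, 1950, 2500]),
}


def summarize(samples):
    """Return per-device medians plus the fastest and slowest desktop devices."""
    medians = {dev: median(times) for dev, (_, times) in samples.items()}
    # Only rank devices that received the same (desktop) version of the page.
    desktop = {
        dev: medians[dev]
        for dev, (version, _) in samples.items()
        if version == "desktop"
    }
    fastest = min(desktop, key=desktop.get)
    slowest = max(desktop, key=desktop.get)
    return medians, fastest, slowest


medians, fastest, slowest = summarize(samples)
print(medians, fastest, slowest)
```

The median is used rather than the mean so that a single slow outlier, such as the 5200 ms sample for Device A, does not skew the result.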

This demonstrates the importance of looking at the context of what’s being tested. In the comparisons below we’ll make sure to keep the desktop vs mobile issue in mind.

Silk vs Silk

Let’s start making some comparisons. The results table is complicated when all 6 rows are viewed. The checkboxes are useful for making more focused comparisons. The Silk (accel off) and Silk (accel on) results show that indeed Silk performed better with acceleration turned off for every URL. This is surprising, but there are some things to note.

First, this is the first version of Silk. Jon Jenkins, Director of Software Development for Silk, spoke at Velocity Europe a few weeks back. In his presentation he showed different places where the split in Silk’s split architecture could happen (slides 26-28). He also talked about the various types of optimizations that are part of the acceleration. Although he didn’t give specifics, it’s unlikely that all of those architectural pieces and performance optimizations have been deployed in this first version of Silk. The test results show that some of the obvious optimizations, such as concatenating scripts, aren’t happening when acceleration is on. I expect we’ll see more optimizations rolled out during the Silk release cycle, just as we do for other browsers.

A smaller but still important issue is that although the browser cache was cleared between tests, the DNS cache wasn’t cleared. When acceleration is on there’s only one DNS lookup needed – the one to Amazon’s server. When acceleration is off Silk has to do a DNS lookup for every unique domain – an average of 13 domains per page. Having all of those DNS lookups cached gives an unfair advantage to the “acceleration off” page load times.
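To make the DNS point concrete, here is a small sketch that counts the unique domains in a page's resource list, which is the number of lookups a browser with a cold DNS cache would need when acceleration is off. The resource URLs are placeholders, not data from the test, and the helper name `unique_hostnames` is hypothetical.

```python
from urllib.parse import urlsplit


def unique_hostnames(resource_urls):
    """Return the set of distinct hostnames in a list of resource URLs."""
    hosts = set()
    for url in resource_urls:
        host = urlsplit(url).hostname  # lowercased; None if the URL has no host
        if host:
            hosts.add(host)
    return hosts


# Hypothetical resource list for one page (the example.* names are placeholders).
resources = [
    "http://www.example.com/",
    "http://www.example.com/css/site.css",
    "http://img.example-cdn.net/logo.png",
    "http://img.example-cdn.net/hero.jpg",
    "http://ads.example.org/ad.js",
]

hosts = unique_hostnames(resources)
lookups_accel_off = len(hosts)  # one lookup per unique domain with a cold DNS cache
lookups_accel_on = 1            # per the post: only the lookup to Amazon's server
print(lookups_accel_off, lookups_accel_on)
```

With a warm DNS cache both counts drop to zero, which is why leaving the DNS cache uncleared hides a cost that would otherwise fall mostly on the "acceleration off" runs.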

I’m still optimistic about the performance gains we’ll see as Silk’s split architecture matures, but for the remainder of this comparison we’ll use Silk with acceleration off since that performed best.

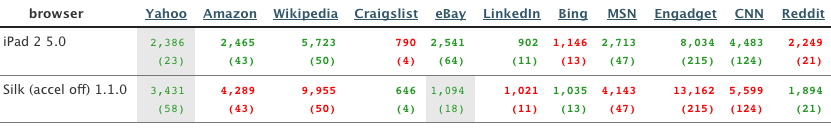

Silk vs iPad

I had both an iPad 1 and iPad 2 at my disposal so I included both in the study. The iPad 1 was the slowest across all 11 URLs so I restricted the comparison to Silk (accel off) and iPad 2.

The results are mixed, with the iPad 2 being faster for most but not all URLs. The iPad 2 is faster in 7 URLs. Silk is faster in 3 URLs. One URL (eBay) is apples and oranges since Silk gets a mobile version of the site (18 resources compared to 64 resources for the desktop version).

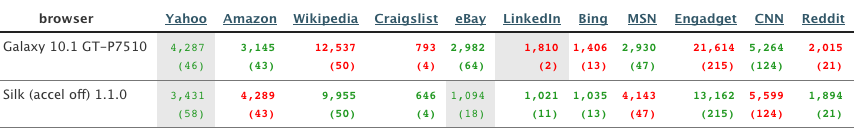

Silk vs Galaxy

Comparing the Galaxy 7.0 to any other tablet is not fair since Galaxy 7.0 receives a lighter mobile version in 6 of 11 URLs. The Galaxy 7.0 has the slowest page load time in 3 of the 4 URLs where it, Galaxy 10.1, and Silk all receive the desktop version. Since it’s slower head-to-head and has mobile versions in the other URLs, I’ll focus on comparing Silk to the Galaxy 10.1.

Silk is faster in 7 URLs. The Galaxy 10.1 is faster in 3 URLs. One URL (eBay) is mixed as Silk gets a mobile version (18 resources) while the Galaxy 10.1 gets a desktop version (64 resources).
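The win counts in these two-way comparisons can be tallied with a sketch like the following, which sets aside any URL where the two devices received different versions of the page. The device labels, URLs, and timings are invented for illustration, and the `tally` helper is hypothetical rather than part of Loadtimer.

```python
def tally(results, a, b):
    """Count head-to-head wins for devices a and b.

    results maps URL -> {device: (version, median_ms)}. URLs where the two
    devices received different versions are counted as "mixed", not as wins.
    """
    counts = {a: 0, b: 0, "mixed": 0, "tie": 0}
    for per_device in results.values():
        ver_a, ms_a = per_device[a]
        ver_b, ms_b = per_device[b]
        if ver_a != ver_b:
            counts["mixed"] += 1  # apples and oranges: skip the comparison
        elif ms_a < ms_b:
            counts[a] += 1
        elif ms_b < ms_a:
            counts[b] += 1
        else:
            counts["tie"] += 1
    return counts


# Hypothetical medians (ms); not the measured results.
results = {
    "http://site-one.example/": {"X": ("desktop", 3000), "Y": ("desktop", 3400)},
    "http://site-two.example/": {"X": ("desktop", 5200), "Y": ("desktop", 4100)},
    "http://site-three.example/": {"X": ("mobile", 1800), "Y": ("desktop", 4600)},
}

counts = tally(results, "X", "Y")
print(counts)
```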

Takeaways

These results show that, as strange as it might sound, Silk appears to be faster when acceleration is turned off. Am I going to turn off acceleration on my Kindle Fire? No. I don’t want to miss out on the next wave of performance optimizations in Silk. The browser is sound. It holds its own compared to other tablet browsers. Once the acceleration gets sorted out I expect it’ll do even better.

More importantly, it’s nice to have some real data and to have Loadtimer to help with future comparisons. Doing these comparisons to see which browser/tablet/phone is fastest makes for entertaining reading and heated competition. But all of us should expect more scientific rigor in the reviews we read, and push authors and ourselves to build and use better tools for measuring performance. I hope Loadtimer is useful. Loadtimer plus pcapperf and the Mobile Perf bookmarklet are the start of a mobile performance toolkit. Between the three of them I’m able to do most of what I need for analyzing mobile performance. It’s still a little clunky, but just as happened in the desktop world, we’ll see better tools with increasingly powerful features across more platforms as the industry matures. It’s still early days.

Guypo | 01-Dec-11 at 11:41 am

Interesting indeed.

I agree that it doesn’t really make sense that the accelerated version would be slower, since it addresses bottlenecks we know exist. Perhaps it’s indeed the first-version aspect; we’ll see how it evolves.

Re Silk vs. iPad, it’s worth noting that when Silk was faster, it was faster by very little, while when iPad was faster, it was often much faster. A quick calculation shows that on the 3 sites Silk was faster, it was faster by an average of ~200ms, while on the 6 sites the iPad was faster, it was faster by an average of 2.3 seconds.

This may mean the heavier pages, which IMO are the most common pages for tablets, perform significantly worse on Silk, much more than what the initial analysis shows.

Perhaps it’s worth running this analysis on a set of less high-end websites, which match the average page sizes as shown in the HTTP Archive.

Big Daddy | 01-Dec-11 at 1:51 pm

The JavaScript-based test convention here may be flawed since Safari is known to fire its onload event prior to the typical convention of loading, rendering, laying out, and reflowing. Since both Silk & Galaxy use Android WebKit I wouldn’t expect much variance in their onload firing, but it is probably worth revisiting the results for iPad 2.

http://www.howtocreate.co.uk/safaribenchmarks.html

Steve Souders | 01-Dec-11 at 2:23 pm

@Guypo: Yes, it seems like Silk does better on the websites with fewer resources. I encourage you and others to run more tests that interest you. I’ll add a feature to Loadtimer to make that possible – probably a “test name” field that you can enter to separate your data into a personalized table.

@Big Daddy: I was unable to produce the problem you cite in that article. Can you reproduce it? If so, please send the steps to do so. Right now I think that article is in error. Or at best is unclear on the problem it’s describing.

klkl | 01-Dec-11 at 3:54 pm

How does it compare with Opera Mobile with Turbo? (which is similar to Silk) or Opera Mini (sort-of like Silk with a dumb terminal)?

Big Daddy | 01-Dec-11 at 9:59 pm

I’m able to reproduce the Safari discrepancy by looking at the state of the last website to load when the “Done” alert appears. I tried putting yahoo.com and craigslist.com as the last entry in the list and this is what I saw:

Craigslist

-on iPad, when the Done alert appears the craigslist display is completely blank!

http://dl.dropbox.com/u/27420522/loadtimer/iPad_craigslist.jpg

-on MacOS Firefox the page is fully visible when the Done alert appears

http://dl.dropbox.com/u/27420522/loadtimer/Firefox_craigslist.png

Yahoo

-on iPad, the top most Search box, Sign in banner, and main image have not even rendered when the Done alert appears

http://dl.dropbox.com/u/27420522/loadtimer/iPad_yahoo.jpg

-on MacOS Firefox all of the aforementioned are completely rendered

http://dl.dropbox.com/u/27420522/loadtimer/Firefox_yahoo.png

I didn’t have a chance to test on more platforms but this evidence seemed pretty compelling. Thoughts?

Markus Leptien | 02-Dec-11 at 5:04 am

I am wondering about the influence of CPU power. I saw just recently that even in a desktop environment CPU power can have a major impact on web performance:

http://www.webpagetest.org/result/111202_RN_2C9Q6/1/details/

vs.

http://www.webpagetest.org/result/111202_2X_2C9S6/1/details/

(Look at the CPU utilization)

I wonder what the comparison (of the protocols) would look like in a (CPU)-normalized environment…

Very interesting stuff…

Craig Hyde | 02-Dec-11 at 7:46 am

It actually makes sense that, for these sites, the Silk browser would be slower with acceleration turned on…

While there is a lot of magic in the presentation of their optimization techniques, the main 2 algorithms (as far as I can tell) are image compression and an Amazon managed CDN.

These sites are some of the largest on the web, and thus already have advanced content delivery networks in play. I’d have to imagine they are more geographically distributed and optimized than Amazon’s brand new CDN infrastructure. By going through the Silk acceleration to view these sites, it’s likely that you’re adding an additional bottleneck to their delivery.

It would be interesting to test the Silk acceleration on less popular sites, those that don’t already have all their content pushed out through the delivery networks like Akamai.

Steve Souders | 02-Dec-11 at 10:40 am

@klkl: Now that Loadtimer is available people can use it to do any comparisons they want. I encourage you to test Opera Mobile & Mini – that would be very interesting.

@Markus: CPU charts for mobile devices would be awesome but aren’t currently available. Someday…

@Craig: That’s a great point. Similar to @klkl, I encourage you to use Loadtimer to do that comparison. (But I might poke at it too.)

Steve Souders | 02-Dec-11 at 11:02 am

@Big Daddy: There are two issues that are being confused here. The article you cite says Safari fires the onload event too soon – I’m still certain that’s not true. I’ve run several tests and it always fires at the appropriate time compared to other browsers.

The confusion comes because the sites you’re looking at have dynamic behavior that happens after the onload event. Here’s a waterfall chart for yahoo.com showing that the onload fires at 2.9 seconds, but ~20 scripts and images are downloaded after that point.

This activity after the onload event affects rendering. Looking at the filmstrip of screenshots at the top of that yahoo.com waterfall page, we see that after the onload fires the code and resources that run later alter the banner across the top and render the ads on the right hand side.

There’s no real “typical” for this type of dynamic rendering behavior. That’s a prime situation where browser behavior differs depending on things outside of any spec such as whether the browser loads dynamic scripts sequentially or in parallel, or executes JS before downloading stylesheets.

Another important takeaway from your comment is the issue from Jason’s comment in my previous post: “onload” doesn’t necessarily represent “page ready”. I responded to that and agree that onload is just a proxy and we need something better, but right now onload is the best thing we have.

Finally, your comment pointed out a bug in Loadtimer that exacerbates this issue. After the last URL is loaded, Loadtimer displays the alert dialog saying “Done” inside the iframe’s onload handler. This blocks other code from running – other code that could include the code to do the final rendering changes to the page. Again, since things like onload handler sequence are non-deterministic across browsers, it’s possible that Loadtimer’s alert fires sooner on the iPad than in Firefox and thus blocks more rendering. To fix this I’ve put the dialog in a setTimeout so that all the onload handlers in the iframe fire.

Great comment and thanks for the images – that really helped.

Daniel | 02-Dec-11 at 2:50 pm

+1 for inclusion of Opera Mobile (with/without Turbo) & Opera Mini – would be more “apples and apples”.

Craig Hyde | 06-Dec-11 at 2:41 pm

@Steve: hopefully I’ll be able to try it with a Fire after xmas!